Deep Learning (DL) is a subset of Machine Learning that imitates the way humans gain certain types of knowledge. It is an essential element of data science, which also includes statistics and predictive modeling. By making the collection, analysis, and interpretation of large amounts of data faster and easier, it is especially beneficial to data scientists.

How it Works

Machines solve complex problems by learning from large amounts of data, even when the dataset is diverse, unstructured, and interconnected. Deep Learning works much like human learning: from experience. Every time the algorithm performs a task, the machine learns and tweaks itself to improve outcomes. In general, the more data Deep Learning algorithms learn from, the better they perform.
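The learn-and-adjust cycle described above can be sketched in a few lines. This is a minimal illustration, not a real Deep Learning system: it assumes a one-parameter model `y = w * x` trained by plain gradient descent on made-up data, and the names `train`, `data`, and `lr` are hypothetical.

```python
def train(data, lr=0.01, epochs=50):
    """Fit y = w * x by gradient descent; return w and the loss after each epoch."""
    w = 0.0
    losses = []
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad  # the "tweak": nudge w in the direction that reduces the error
        loss = sum((w * x - y) ** 2 for x, y in data) / len(data)
        losses.append(loss)
    return w, losses


# Made-up data that follows y = 3x exactly (an assumption for illustration).
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0)]
w, losses = train(data)
```

Each pass over the data moves `w` closer to 3 and lowers the loss. Deep networks repeat the same idea across many layers and millions of parameters.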

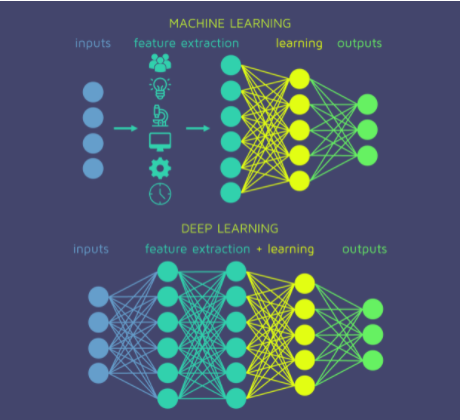

Deep Learning can also automate predictive analytics. While many traditional Machine Learning algorithms are linear or relatively shallow, Deep Learning algorithms are stacked in a hierarchy of increasing complexity and abstraction. Deep Learning drives many of the AI applications and services that improve automation.

To achieve an acceptable level of accuracy, Deep Learning programs require massive amounts of training data and processing power. Neither was easily available to programmers until the era of big data and cloud computing.

Deep Learning programs build complex statistical models directly from their own iterative output. Because of this, they can create accurate predictive models from large quantities of unlabeled, unstructured data.

Deep Learning is behind many of the products and services we use every day, such as digital assistants, voice-enabled remotes, and credit-card fraud detection. Emerging technologies, such as self-driving cars, also use Deep Learning.

Furthermore, as the Internet of Things (IoT) becomes more pervasive, most of the data that humans and machines create is unstructured and unlabeled. Deep Learning can still build precise predictive models from such data.

Deep Learning Methods

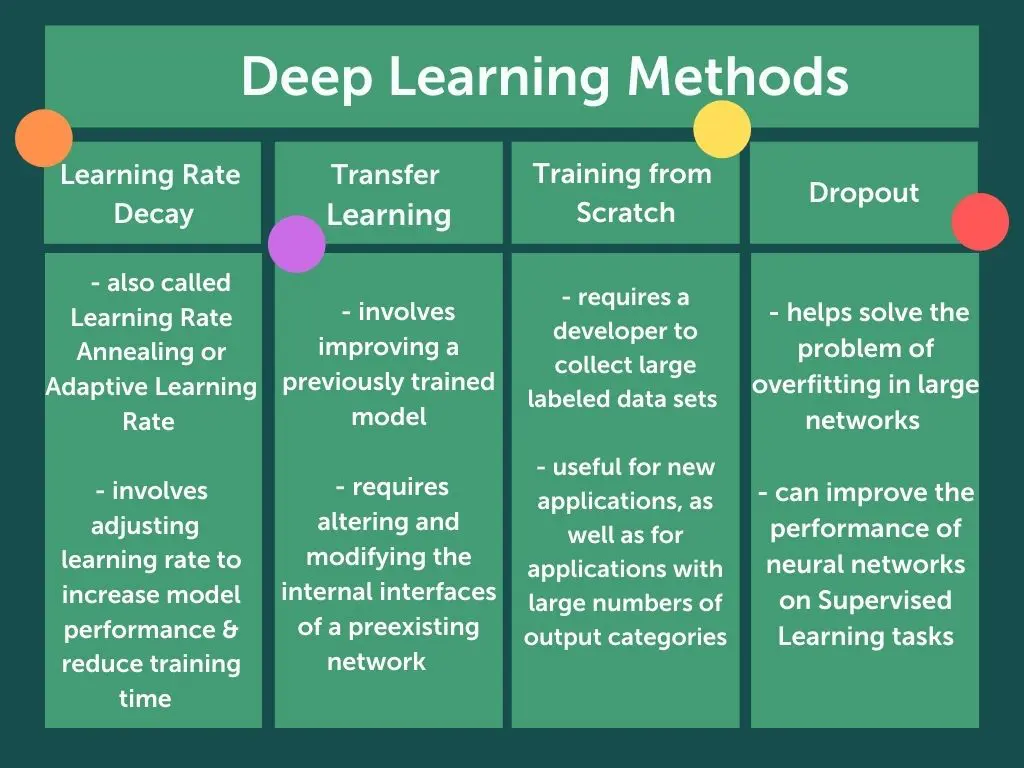

Deep Learning models can be created using various methods. These techniques include Learning Rate Decay, Transfer Learning, Training from Scratch, and Dropout.

Learning Rate Decay

The Learning Rate Decay method, also called Learning Rate Annealing or Adaptive Learning Rate, is the process of adjusting the learning rate to increase model performance and reduce training time. The “learning rate” is a hyperparameter, set before the learning process begins, that determines how strongly the model is adjusted each time its weights are updated. In other words, this parameter controls how much change a model experiences at each step.

Learning rates that are too high may result in unstable training. On the other hand, learning rates that are too low may result in a lengthy training process that can get stuck. A common and effective approach is to use techniques that reduce the learning rate over time during model training.
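Two common ways of reducing the learning rate over time are exponential decay and step decay. The sketch below shows both as plain functions. The function names and the example values (`0.1`, `0.9`, and so on) are assumptions chosen for illustration, not settings recommended by the article.

```python
def exponential_decay(initial_lr, decay_rate, epoch):
    """Shrink the learning rate by a constant factor every epoch."""
    return initial_lr * decay_rate ** epoch


def step_decay(initial_lr, drop_factor, epochs_per_drop, epoch):
    """Multiply the learning rate by drop_factor once every epochs_per_drop epochs."""
    return initial_lr * drop_factor ** (epoch // epochs_per_drop)


# Hypothetical schedules: start at 0.1 and decay over the first epochs.
exp_schedule = [exponential_decay(0.1, 0.9, epoch) for epoch in range(5)]
step_schedule = [step_decay(0.1, 0.5, 10, epoch) for epoch in (0, 9, 10, 25)]
```

Both schedules start with larger steps, which speed up early training, and finish with smaller steps, which help the model settle instead of overshooting.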

Transfer Learning

The Transfer Learning process involves refining a previously trained model for a new task. This process requires access to the internals of a preexisting network so that it can be modified. Engineers and programmers first feed the existing network new data that contains previously unknown classifications.

Once the programmers adjust the network, the model can perform new tasks with more specific categorizing abilities. An advantage of this method is that it requires much less data, and the computation time is reduced to hours, or even minutes.
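The idea of adjusting an existing network for a new task can be illustrated with a toy example. The sketch below assumes one common variant of transfer learning: the pretrained layer is kept frozen and only a new output layer (the "head") is trained on a small labeled data set. The weights, data, and function names are all hypothetical stand-ins, since a real pretrained network would be loaded from a framework or model file.

```python
import math

# Stand-in for a pretrained network's weights (hypothetical values).
# They are "frozen": the training loop below never changes them.
PRETRAINED_WEIGHTS = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]


def extract_features(x):
    """Frozen feature extractor: one tanh layer using the pretrained weights."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_WEIGHTS]


def predict(head, bias, x):
    """Probability of class 1 from the new head applied to the frozen features."""
    z = sum(h * f for h, f in zip(head, extract_features(x))) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(samples, lr=0.5, epochs=200):
    """Train only the new output layer with stochastic gradient descent."""
    head, bias = [0.0] * len(PRETRAINED_WEIGHTS), 0.0
    for _ in range(epochs):
        for x, label in samples:
            feats = extract_features(x)
            error = predict(head, bias, x) - label  # log-loss gradient w.r.t. z
            head = [h - lr * error * f for h, f in zip(head, feats)]
            bias -= lr * error
    return head, bias


# A small labeled data set for the new task (made up for illustration).
samples = [([1.0, 0.0], 1), ([0.9, 0.2], 1), ([0.0, 1.0], 0), ([0.1, 0.8], 0)]
head, bias = fine_tune_head(samples)
```

Because only the four numbers in `head` and `bias` are learned, a handful of examples is enough here. That mirrors the point above about needing much less data and computation than building a network from the ground up.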

Training from Scratch

Training from Scratch requires a developer to collect large labeled data sets and configure a network architecture from the ground up. This technique is useful for new applications, as well as for applications with large numbers of output categories. However, this approach is less common because it requires a very large amount of data.

Dropout

The Dropout method helps solve the problem of overfitting in large networks that operate with many parameters. It does so by randomly dropping units and their connections from the neural network during training.

The Dropout method can improve the performance of neural networks on Supervised Learning tasks in areas such as classification of documents, speech recognition, and computational biology.
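A minimal sketch of the mechanism follows. It assumes the widely used "inverted dropout" variant, in which each unit's output is zeroed with probability `drop_prob` during training and the surviving outputs are scaled up to compensate. The function name and parameters are hypothetical rather than any particular library's API.

```python
import random


def dropout(activations, drop_prob, training=True, rng=random):
    """Inverted dropout: zero each unit with probability drop_prob while training."""
    if not training or drop_prob == 0.0:
        # At inference time every unit is kept and nothing is rescaled.
        return list(activations)
    keep_prob = 1.0 - drop_prob
    # Scale the surviving units by 1 / keep_prob so the expected total is unchanged.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


rng = random.Random(0)  # seeded so the example is reproducible
layer_output = [1.0] * 10
dropped = dropout(layer_output, drop_prob=0.5, rng=rng)
unchanged = dropout(layer_output, drop_prob=0.5, training=False)
```

Because a different random subset of units is silenced on every training step, the network cannot rely too heavily on any single unit, which is what discourages overfitting.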

Examples

Since Deep Learning models process information in a way loosely similar to the human brain, they are applied to many tasks done by humans. The most common examples of programs and software using Deep Learning include image recognition tools, Natural Language Processing (NLP), medical diagnosis, speech recognition software, stock market trading signals, and network security. These tools in turn power a wide array of applications, such as self-driving cars and language translation services.

In fact, Deep Learning applications are so well integrated into the products and services we use every day that we rarely notice or think about the backend where the complex data processing occurs.

Some popular Deep Learning examples include:

Financial Services

Many financial institutions use Deep Learning’s predictive analytics to conduct algorithmic stock trading, assess loan approval risks for businesses, detect fraud, and help manage client credit and investment portfolios.

Law Enforcement

Deep Learning algorithms can analyze transactional data and recognize dangerous patterns indicative of fraudulent or criminal activities.

Law enforcement personnel can also use DL applications, such as speech recognition and computer vision, to improve the efficiency of investigative analysis. Such DL models can extract patterns and evidence from images, sound and video recordings, and documents, helping law enforcement analyze large amounts of data quickly and accurately.

Customer Service

The most common example of Deep Learning in customer service is the AI chatbot, used in a variety of applications and customer service portals. Traditional chatbots, like those commonly found in call centers, use natural language and some visual recognition, while more sophisticated chatbots learn to determine multiple possible responses to ambiguous questions. Examples of virtual assistants include Siri, Alexa, and Google Assistant.

Healthcare

The digitization of hospital records and images has immensely benefited the healthcare industry. Image recognition applications that run on Deep Learning support medical imaging specialists and radiologists, helping them analyze images in less time. These applications also aid medical research. For example, cancer researchers can use Deep Learning models to automatically detect cancer cells.

Text Generation

Deep Learning software, such as Grammarly, learns to recognize the grammar and style of a piece of text. It can then automatically generate new text that matches the spelling, grammar, and style of the original.

Aerospace and Military

Deep Learning models, such as custom convolutional neural networks (CNNs), are used to detect objects in satellite imagery. These models identify areas of interest, as well as safe or unsafe zones.

Industrial Automation

Models running on Deep Learning algorithms help improve worker safety in environments like factories and warehouses by automatically detecting when a worker or object is getting too close to a machine.

Adding Color

Black-and-white photos and videos can now have color added to them through Deep Learning models. In the past, this was an extremely time-consuming manual process.

Computer Vision

Deep Learning has enhanced computer vision immensely, helping computers achieve high accuracy in image classification, object detection, and segmentation.

Deep Learning vs Machine Learning

While Deep Learning is a subset of Machine Learning, it differs from ML in the way it solves problems, the type of data it works with, and the methods by which it learns.

| Deep Learning | Machine Learning |
| --- | --- |
| DL learns features incrementally, largely eliminating the need for domain expertise | ML requires a domain expert to identify the most relevant features |
| DL algorithms take much longer to train | ML algorithms need only a few seconds to a few hours of training |
| DL algorithms take much less time to run tests | Test time for ML algorithms increases with the size of the data |
| Requires high-end machines and high-performing GPUs | Does not require costly high-end machines |
| Preferable for large amounts of data | Preferable for small amounts of data |
| DL can ingest and process unstructured data, removing some of the human dependency | ML relies on structured, labeled data to make predictions |

Read more about how AI, leveraging ML and DL algorithms, helps modern businesses in this blog: 10 Benefits and Applications of AI in Business.

The Fuse.ai center is an AI research and training center that offers blended AI courses through its proprietary Fuse.ai platform. The proprietary curriculum includes courses in Machine Learning, Deep Learning, Natural Language Processing, and Computer Vision. Certifications like these help engineers become leading AI industry experts and build fulfilling, ever-growing careers in the field.